FastAPI vs Flask vs Django

Which One Should You Use for LLM Apps?

Let’s skip the theory.

If you’re building with LLMs (RAG systems, chatbots, assistants, or AI-powered APIs), the framework you choose matters more than you think.

Yes, they all “work.”

But the dev experience, performance, scalability, and integration pain differ wildly.

I’ve used all three across real-world AI projects.

Here’s what I’ve learned 👇

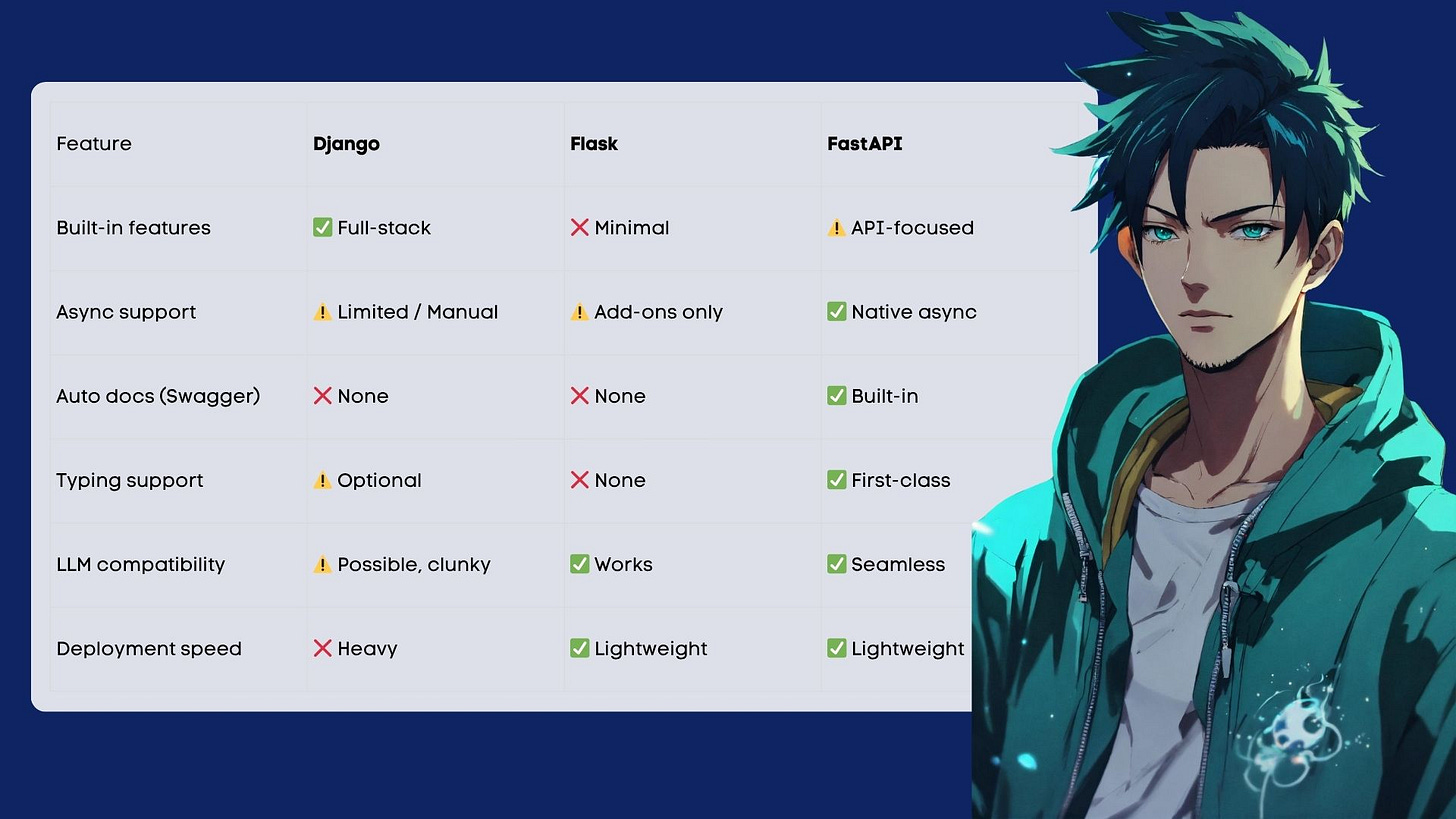

Frameworks compared at a glance.

If you’re building an LLM API.

You want low latency, async, and type safety.

FastAPI wins here — every time.

Native support for async def = non-blocking I/O

Pydantic validation means your input/output schema is tight

Swagger + OpenAPI docs out of the box

Plays well with LangChain, Transformers, OpenAI SDK
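Why does async def matter so much for LLM work? Most of a request is spent waiting on the model. Here is a rough, framework-free sketch using only asyncio. The names fake_llm_call, handle_many, and timed_run are made up for illustration, and the 0.2 second sleep is an assumed stand-in for a real upstream call.

```python
import asyncio
import time


async def fake_llm_call(prompt: str, delay: float = 0.2) -> str:
    """Hypothetical stand-in for a slow upstream LLM request."""
    await asyncio.sleep(delay)  # hands control back to the event loop while waiting
    return f"echo: {prompt}"


async def handle_many(prompts: list[str]) -> list[str]:
    """Run several waiting-heavy calls concurrently on one event loop."""
    return await asyncio.gather(*(fake_llm_call(p) for p in prompts))


def timed_run(prompts: list[str]) -> tuple[list[str], float]:
    """Return the replies plus the wall-clock time the whole batch took."""
    start = time.perf_counter()
    results = asyncio.run(handle_many(prompts))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    results, elapsed = timed_run(["a", "b", "c"])
    print(results, f"{elapsed:.2f}s")
```

Three calls that each wait 0.2 seconds should finish in roughly the time of one, not three back to back. An async def route handler in FastAPI gets the same benefit, as long as the client library you call inside it is also async.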

Best for:

→ Custom LLM endpoints

→ Chatbot APIs

→ Embedding + search pipelines

→ ML inference services

If you’re building an end-to-end app around LLMs

Let’s say you’re building:

A document Q&A tool

A multi-user AI dashboard

A tool where LLMs are just one feature

Django starts to shine here.

Why?

Comes with user auth, admin panel, ORM, views

Good for quickly wiring business logic, users, and UI

Add DRF (Django REST Framework) for APIs

Can plug into Celery for background tasks (e.g., async LLM processing)
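The background-task idea is simple: the view records a job, returns right away, and a worker does the slow LLM call later. Below is a minimal sketch of that pattern. To be clear, this is not Celery. It swaps in a stdlib queue and a thread so it runs anywhere, and every name in it (jobs, pending, submit, worker, slow_llm_summary) is hypothetical.

```python
import queue
import threading
import uuid

jobs: dict[str, dict] = {}            # job_id -> {"status": ..., "result": ...}
pending: queue.Queue = queue.Queue()  # stand-in for a real broker-backed queue


def slow_llm_summary(text: str) -> str:
    """Hypothetical placeholder for a slow LLM call."""
    return text.upper()


def submit(text: str) -> str:
    """What the web view would do: record the job, enqueue it, return at once."""
    job_id = uuid.uuid4().hex
    jobs[job_id] = {"status": "queued", "result": None}
    pending.put((job_id, text))
    return job_id


def worker() -> None:
    """What the background worker would do: pull jobs and store results."""
    while True:
        job_id, text = pending.get()
        jobs[job_id] = {"status": "done", "result": slow_llm_summary(text)}
        pending.task_done()


threading.Thread(target=worker, daemon=True).start()

if __name__ == "__main__":
    job_id = submit("summarize this")
    pending.join()  # a real client would poll a status endpoint instead
    print(jobs[job_id])
```

With Celery, the worker runs in a separate process and the queue lives in a broker such as Redis or RabbitMQ, so jobs survive a web server restart. The shape of the code stays about the same.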

But be warned:

Django + LLMs = more setup, more complexity.

It works, but you’ll fight the framework sometimes.

Best for:

→ SaaS tools

→ AI platforms with login/payment/etc.

→ Use cases where LLM is a feature, not the product

If you want control (but lightweight)

Flask sits in the middle.

It’s:

Lightweight

Battle-tested

Minimal to the core

But it doesn’t scale elegantly unless you build the glue yourself.

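Want async? Flask 2.0+ accepts async def views, but each request still ties up a worker. For a fully async stack, you’ll need Quart or hacks.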

Need auth? Add it.

Need docs? Write them.

Still, for simple LLM apps, it’s more than enough.

Best for:

→ Solo projects

→ One-off LLM demos

→ Lightweight tools or microservices

TL;DR — Which one to use?

Build + deploy fast LLM APIs → FastAPI

Build a complete product with LLM inside → Django

Quick demo or solo MVP → Flask

Some real-world examples.

1. FastAPI + OpenAI SDK

→ Custom chatbot API with streaming support

→ 20ms faster response time than Flask in production

→ Auto docs + Pydantic = saved time debugging prompts
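For a feel of what streaming support means in practice, here is a small sketch of the core piece: an async generator that emits tokens as Server-Sent Events frames. It is an illustration, not the code from that project. SSE itself is an assumed transport, fake_token_stream is a hypothetical stand-in for a real LLM client, and the [DONE] marker is just a common convention.

```python
import asyncio
from typing import AsyncIterator


async def fake_token_stream(reply: str) -> AsyncIterator[str]:
    """Hypothetical stand-in for an LLM client that yields tokens as they arrive."""
    for token in reply.split():
        await asyncio.sleep(0)  # pretend to wait for the next token
        yield token


async def sse_events(reply: str) -> AsyncIterator[str]:
    """Wrap each token in a Server-Sent Events frame, then signal completion."""
    async for token in fake_token_stream(reply):
        yield f"data: {token}\n\n"
    yield "data: [DONE]\n\n"  # assumed end-of-stream marker


async def collect(reply: str) -> list[str]:
    """Gather all frames into a list (handy for testing the generator)."""
    return [frame async for frame in sse_events(reply)]


if __name__ == "__main__":
    for frame in asyncio.run(collect("hello from the model")):
        print(frame, end="")
```

In FastAPI, a generator like sse_events can be handed to StreamingResponse with media_type="text/event-stream", so the client sees tokens as they are produced instead of waiting for the full reply.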

2. Django + LangChain + Postgres

→ AI dashboard with user logins and document uploads

→ Used Django admin to review logs, users, and feedback

→ Celery queue for async LLM processing

3. Flask + Hugging Face Transformers

→ Deployed a small summarization service on EC2

→ Flask + Gunicorn = simple and stable

→ Perfect for a one-function tool

And here’s my take.

In 2026, AI engineers need frameworks that don’t slow them down.

For me, the stack looks like:

FastAPI for building

Django, when I need structure

uv for envs

Docker + Fly.io or Render for deployment

Observability from Day 1

Your framework should fit your product, not your nostalgia.

Want more breakdowns like this?

Every week, I send out practical posts like this:

→ Building LLM systems

→ Tools that actually work

→ Dev workflows that scale

→ No hype. Just how it works.

Let’s stop building demos.

Let’s build systems that last.
